[Review] The Rise of HBF (High Bandwidth Flash): The Savior to Break AI's Memory Capacity Wall?

Introduction & Breaking News

As LLM sequence lengths surge into the 10 million (10M) token era, AI infrastructure is hitting a severe “memory capacity wall.” Amidst this crisis, SK hynix and SanDisk officially announced their joint development of HBF (High Bandwidth Flash) today, sending ripples through the industry. Built on NAND flash, which is slower than DRAM but offers far greater capacity, HBF has rapidly emerged as a leading candidate for a new AI memory tier.

This article synthesizes six core discussions currently dominating the engineering and investment communities, ranging from today’s breaking news and HBF’s architecture to its inevitable integration with optical technologies, market dynamics, and realistic engineering hurdles.

0. < [Official] SK hynix & SanDisk Formalize HBF Joint Development >

Core Abstract: SK hynix officially announced a strategic partnership and joint development of HBF with SanDisk. This signifies that HBF has moved beyond an academic concept and is now firmly embedded in the roadmaps of two memory giants, with a clear path to commercialization.

Key Insight: “To solve the memory bottleneck in AI infrastructure, the absolute leader in HBM (SK hynix) has joined forces with the pioneer of NAND technology (SanDisk). HBF has officially transitioned from the realm of ‘possibility’ to a concrete timeline of ‘execution’.” (Ref: SK hynix Official Announcement)

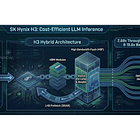

1. < The H³ Architecture: A Hybrid Solution of HBM and HBF >

Core Abstract: To address the extreme HBM capacity shortage in the 10M token era, SK hynix proposed the H³ architecture, which connects HBM and HBF via a daisy-chain configuration. The strategy involves masking NAND’s critical access latency with a 40MB SRAM-based Latency Hiding Buffer (LHB) and placing static, read-only data (like pre-computed KV caches) in the HBF.

Key Insight: “Simulation results show that the H³ structure processes up to 18.8x larger batch sizes in 10M sequence scenarios compared to an HBM-only setup, while delivering 2.69x higher power efficiency.” (Ref: SK hynix H³ Paper Summary, PhotonCap)
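To see why capacity, not compute, caps the batch size at 10M tokens, a rough KV cache estimate helps. The sketch below is a back-of-the-envelope calculation, not a reproduction of the H³ simulation: the model shape (80 layers, 8 KV heads, head dimension 128, 16-bit values) and both capacity figures are hypothetical placeholders, and it ignores the space taken by model weights.

```python
GB = 10**9
SEQ_LEN = 10_000_000  # the 10M-token scenario discussed above

# Hypothetical model shape and capacities, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
ASSUMED_HBM_BYTES = 1536 * GB      # e.g. 8 devices x 192 GB (assumption)
ASSUMED_HBF_BYTES = 16_000 * GB    # extra flash capacity (assumption)


def kv_cache_bytes_per_token(layers, kv_heads, head_dim, bytes_per_value=2):
    """One key vector and one value vector per layer, per KV head."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def max_batch(capacity_bytes, seq_len, per_token_bytes):
    """Number of full-length sequences whose KV cache fits in the capacity."""
    return capacity_bytes // (seq_len * per_token_bytes)


per_token = kv_cache_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM)
per_sequence_gb = per_token * SEQ_LEN / GB

print(f"KV cache per token:    {per_token} bytes")
print(f"KV cache per sequence: {per_sequence_gb:.1f} GB")
print("Batch with HBM only:  ", max_batch(ASSUMED_HBM_BYTES, SEQ_LEN, per_token))
print("Batch with HBM + HBF: ",
      max_batch(ASSUMED_HBM_BYTES + ASSUMED_HBF_BYTES, SEQ_LEN, per_token))
```

Under these made-up numbers a single 10M-token sequence needs roughly 3.3 TB of KV cache, so the HBM-only configuration cannot hold even one sequence. The exact ratio depends entirely on the assumed shapes and capacities and should not be read as the paper's 18.8x figure.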

2. < HBF’s Realistic Limits and the Walls to Overcome >

Core Abstract: While the H³ simulation results are impressive, the barriers from an engineering perspective remain high. The assumption of “purely read-only workloads” is highly restrictive in actual production environments. Furthermore, integrating SRAM, complex controllers, and heterogeneous TSV packaging means HBF will not remain “cheap NAND.” The rapid maturation of alternatives like HBM4 and CXL-based memory pooling also presents fierce competition.

Key Insight: “The one-to-two-orders-of-magnitude latency gap inherent to physical NAND cells cannot be entirely masked by architecture. HBF is not a silver bullet, but rather a purpose-built solution highly likely to fill the niche between HBM and SSDs.” (Ref: HBF’s Limits and Reality, @damnang2)
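One way to reason about how far a buffer can mask latency is the bandwidth-delay product: to keep the consumer fed while a NAND read is outstanding, roughly bandwidth × latency bytes must already be staged. The sketch below applies that to the 40MB Latency Hiding Buffer mentioned in the H³ summary. The 1 TB/s streaming rate and the three latency values are assumptions for illustration, not figures from the source.

```python
MB = 10**6
LHB_BYTES = 40 * MB          # buffer size quoted in the H3 summary

# Assumptions for illustration only (not from the source):
ASSUMED_BANDWIDTH = 1e12               # bytes/s streamed out of HBF (hypothetical)
ASSUMED_LATENCIES_US = (10, 30, 100)   # hypothetical NAND read latencies


def bytes_in_flight(bandwidth_bytes_per_s, latency_s):
    """Data that must be staged to keep the consumer busy for one access latency."""
    return bandwidth_bytes_per_s * latency_s


def max_hidden_latency_s(buffer_bytes, bandwidth_bytes_per_s):
    """Longest access latency the buffer can cover at the given streaming rate."""
    return buffer_bytes / bandwidth_bytes_per_s


for latency_us in ASSUMED_LATENCIES_US:
    needed = bytes_in_flight(ASSUMED_BANDWIDTH, latency_us * 1e-6)
    verdict = "fits in" if needed <= LHB_BYTES else "exceeds"
    print(f"{latency_us:>4} us -> {needed / MB:6.1f} MB needed, {verdict} the 40 MB buffer")

covered_us = max_hidden_latency_s(LHB_BYTES, ASSUMED_BANDWIDTH) * 1e6
print(f"40 MB covers about {covered_us:.0f} us at the assumed rate")
```

The calculation only holds when the next addresses are known far enough ahead to prefetch, which is consistent with restricting HBF to static, read-only data. Unpredictable accesses see the raw NAND latency regardless of buffer size.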

3. < The Memory Supremacy War: Next to the GPU or Across the Network? >

Core Abstract: A fierce architectural battle has begun between NVIDIA and memory manufacturers over HBF. While players like SK hynix and SanDisk aim to place HBF right next to the GPU (on the interposer) to maximize memory vendors’ leverage, NVIDIA seeks to maintain control by keeping it across the network, akin to its ICMS (Inference Context Memory Storage) approach.

Key Insight: “Ultimately, the deciding factors will be ‘heat’ and ‘lifespan.’ HBF’s fate rests on proving that the ‘stone tablet’ (NAND) can survive right next to a 1,000W ‘furnace’ (GPU).” (Ref: HBF: Savior or Mirage for AI Memory?, @NuttyCLD)

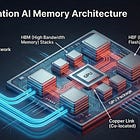

4. < Bandwidth Expansion and the Inevitability of Optical Interconnects >

Core Abstract: The introduction of HBF triggers an explosion in data density, reaching terabyte-scale bandwidths within a single package. Attempting to handle this increased bandwidth with conventional copper connections leads to severe power waste and signal integrity degradation. Ultimately, to transmit HBF’s massive “storage” bandwidth to the processor without bottlenecks, the adoption of optical technologies like CPO (Co-Packaged Optics) is non-negotiable.

Key Insight: “If HBF is like fully opening a dam’s floodgates, Optics is the massive waterway that transports the surging data without bottlenecks. As ‘Data Gravity’ intensifies, the value of the optical ecosystem explodes.” (Ref: HBF and Optics, PhotonCap)
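The power argument can be made concrete with one multiplication: link power is the bit rate times the energy spent per bit. The sketch below is illustrative arithmetic only. The 1 TB/s bandwidth and the two energy-per-bit values are placeholder assumptions, not measured figures for any copper or optical link.

```python
def link_power_watts(bandwidth_bytes_per_s, picojoules_per_bit):
    """Power drawn by a link moving data at the given rate and energy cost."""
    bits_per_s = bandwidth_bytes_per_s * 8
    return bits_per_s * picojoules_per_bit * 1e-12


# Hypothetical inputs, for illustration only.
ASSUMED_BANDWIDTH = 1e12        # 1 TB/s leaving the package (assumption)
ASSUMED_PJ_PER_BIT = {
    "higher-energy link (placeholder)": 5.0,
    "lower-energy link (placeholder)": 1.0,
}

for name, pj in ASSUMED_PJ_PER_BIT.items():
    watts = link_power_watts(ASSUMED_BANDWIDTH, pj)
    print(f"{name}: {watts:.0f} W at 1 TB/s")
```

Because power scales linearly with bandwidth, every picojoule per bit saved is worth 8 W per TB/s, which is why interconnect energy efficiency becomes a first-order concern as package bandwidth grows.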

5. < Topology Innovation: Optical SSD Shattering the Distance Barrier >

Core Abstract: The hidden killer bottleneck in the HBM/HBF era is physical “distance.” The Optical SSD, which replaces electrical links with optical ones, is presented as the ultimate answer for maximizing bandwidth and power efficiency while physically separating storage from the GPU. This is the key to fully liberating storage placement in the data center (disaggregation).

Key Insight: “Optics doesn’t just make SSDs ‘faster.’ It eliminates distance penalties, unlocking the ‘freedom of placement’ for storage. The higher the Retimer Tax, the more Optics wins.” (Ref: Optical SSD Topology Unlock, @BSkypia59338)
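The “Retimer Tax” idea can be sketched with a toy model: an electrical link needs an extra retimer each time the distance exceeds its reach, while an optical link pays a roughly fixed conversion cost regardless of distance at data-center scale. Every number below (reach, base energy, retimer energy, optical energy) is a hypothetical placeholder chosen to show the crossover, not data from the cited post.

```python
import math

# Hypothetical parameters, for illustration only.
ASSUMED_REACH_M = 1.0        # electrical reach before a retimer is needed
ASSUMED_BASE_PJ = 2.0        # electrical energy per bit with no retimers
ASSUMED_RETIMER_PJ = 3.0     # extra energy per bit for each retimer
ASSUMED_OPTICAL_PJ = 6.0     # fixed optical energy per bit (conversion cost)


def retimers_needed(distance_m, reach_m):
    """Retimers required so that no electrical segment exceeds the reach."""
    return max(0, math.ceil(distance_m / reach_m) - 1)


def electrical_pj_per_bit(distance_m, reach_m, base_pj, retimer_pj):
    """Energy per bit for an electrical link, including the retimer tax."""
    return base_pj + retimers_needed(distance_m, reach_m) * retimer_pj


for distance in (0.5, 2.0, 3.0, 10.0):
    electrical = electrical_pj_per_bit(
        distance, ASSUMED_REACH_M, ASSUMED_BASE_PJ, ASSUMED_RETIMER_PJ)
    winner = "optical" if ASSUMED_OPTICAL_PJ < electrical else "electrical"
    print(f"{distance:>5.1f} m: electrical {electrical:.1f} pJ/bit, "
          f"optical {ASSUMED_OPTICAL_PJ:.1f} pJ/bit -> {winner}")
```

In this toy model the electrical link wins at short range and loses once two or more retimers are required. The real crossover point depends on parameters the source does not give.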

Conclusion

HBF is an inevitable endeavor to break through the “capacity wall” facing AI infrastructure. Its most formidable weapons are its terabyte-scale capacity, which HBM simply cannot match, and its relatively low cost. However, it may look better on paper than in reality. The engineering limitations are glaringly clear: the nearly insurmountable latency gap inherent to its NAND origins, the massive thermal management challenges of sitting next to an ultra-hot GPU, and the need for rigorous long-term durability validation.

Whether HBF manages to clear these high hurdles to successfully land as a new memory tier right next to the GPU, or whether it settles into a realistic compromise as a high-speed storage pool across the network, the ultimate takeaway remains exactly the same. To realize a next-generation architecture where the flood of terabyte-scale data churned out by countless HBFs flows freely between nodes without bottlenecks, it must be accompanied by an “Optics-based interconnect innovation” that shatters the physical limits of distance and power inherent to traditional copper wiring.